Designing Trust in AI Products: Beyond Explainability

AI doesn’t just produce outputs. It produces uncertainty.

Traditional software is deterministic.

You click a button, you get a predictable outcome.

AI systems behave differently. They generate probabilities, predictions, and approximations.

That shift fundamentally changes the design problem.

The challenge is no longer only usability.

It’s trust.

Why explainability isn’t enough

Explainable AI has become a popular topic in research and industry. Researchers such as Kate Crawford and Timnit Gebru have highlighted ethical and transparency concerns in AI systems.

But in product environments, showing “how the model works” is rarely what builds trust.

Users don’t need model architecture details.

They need:

Predictability

Control

Feedback

Recovery options

Trust is behavioral, not technical.

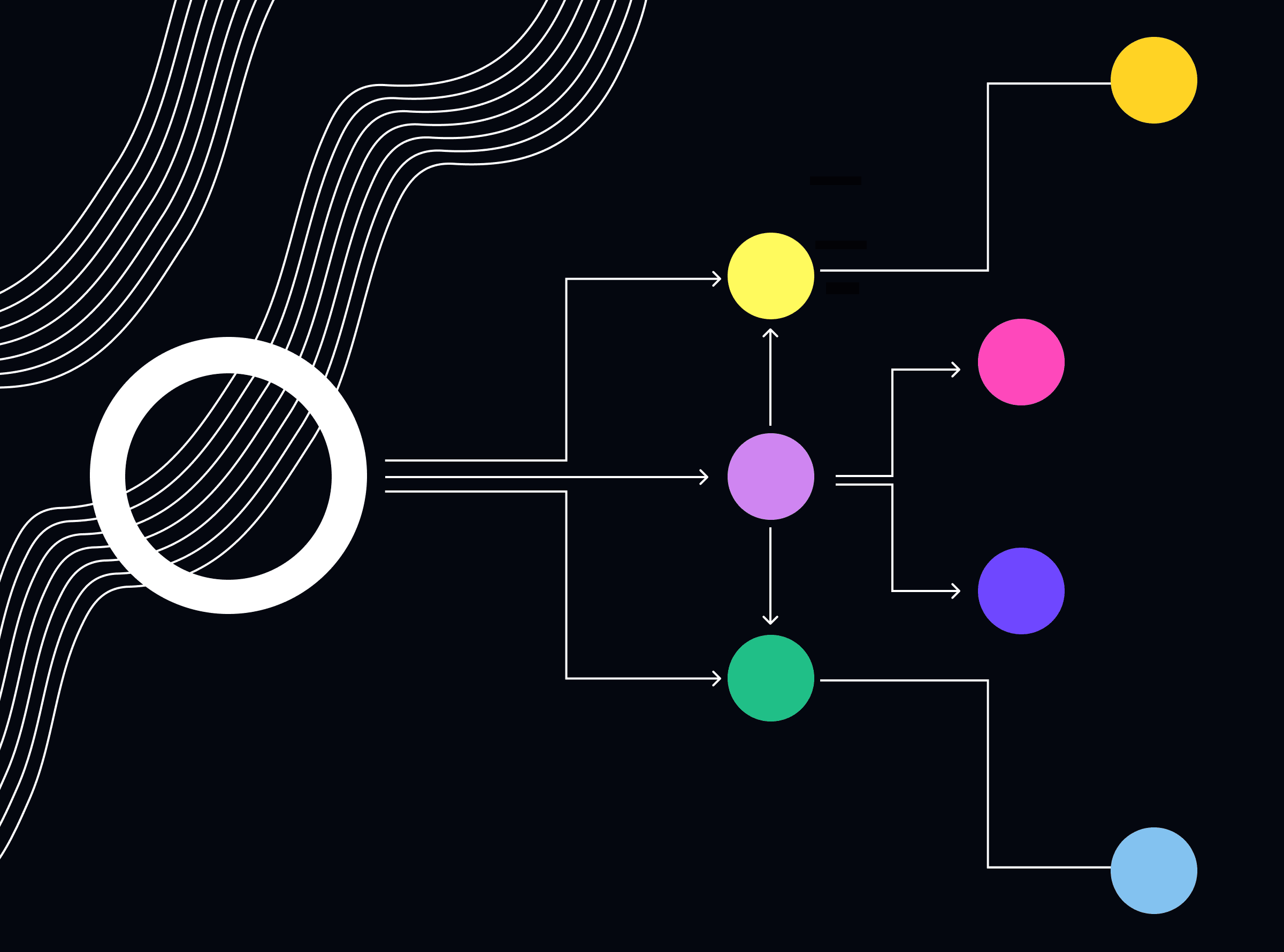

Trust is built through interaction patterns

Don Norman’s work in The Design of Everyday Things emphasizes feedback and visibility as core principles of good design.

In AI products, this translates to:

Confidence indicators (high, medium, low certainty)

Editable outputs

Clear system boundaries

Human-in-the-loop mechanisms

GitHub Copilot lets users accept, reject, or edit its suggestions.

Notion AI lets users refine prompts.

Perplexity cites sources.

These patterns reduce perceived risk.
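
To make the first pattern concrete, here is a minimal sketch of a confidence indicator, assuming the model exposes a probability between 0 and 1. The thresholds, the `confidence_indicator` function, and the message wording are all hypothetical; a real product would tune the cut-offs against observed accuracy.

```python
from dataclasses import dataclass

# Hypothetical cut-offs (assumptions for illustration, not recommended values).
HIGH_THRESHOLD = 0.85
MEDIUM_THRESHOLD = 0.60


@dataclass(frozen=True)
class ConfidenceIndicator:
    label: str    # "high", "medium", or "low"
    message: str  # short text shown next to the AI output


def confidence_indicator(probability: float) -> ConfidenceIndicator:
    """Map a model probability in [0, 1] to a coarse, user-facing label."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= HIGH_THRESHOLD:
        return ConfidenceIndicator("high", "Likely correct. Review is optional.")
    if probability >= MEDIUM_THRESHOLD:
        return ConfidenceIndicator("medium", "Plausible. Please check before using.")
    return ConfidenceIndicator("low", "Uncertain. Treat this as a draft.")
```

The point of the coarse labels is that users act on "check this" far more readily than on a raw number like 0.73.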

The role of recoverability

One of the most overlooked aspects of AI product design is recoverability.

If the system is wrong, can the user:

Correct it easily?

Understand why it failed?

Move forward without friction?

Jakob Nielsen’s usability heuristics emphasize error prevention and recovery.

AI products must elevate this principle even further.

A product that allows safe failure builds more trust than one that promises perfection.
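
One way to picture safe failure is an accepted suggestion that can always be rolled back. The sketch below assumes a simple text document; the `Document` class and its method names are hypothetical and stand in for whatever state the product actually manages.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    text: str
    # Earlier versions of the text, most recent last.
    _history: list[str] = field(default_factory=list)

    def apply_suggestion(self, suggested_text: str) -> None:
        """Accept an AI suggestion, keeping the previous text so it can be restored."""
        self._history.append(self.text)
        self.text = suggested_text

    def undo(self) -> bool:
        """Restore the text from before the last accepted suggestion.

        Returns False when there is nothing left to undo.
        """
        if not self._history:
            return False
        self.text = self._history.pop()
        return True


doc = Document("Original draft")
doc.apply_suggestion("AI rewrite of the draft")
doc.undo()  # doc.text is "Original draft" again
```

Because accepting a suggestion never destroys the previous state, a wrong output costs the user one click rather than their work.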

Trust compounds over time

Trust in AI is not built in onboarding.

It emerges from consistent system behavior across repeated interactions.

Behavioral research suggests that people grow more confident in uncertain environments when a system behaves reliably and predictably.

AI products should therefore focus on:

Stable interaction patterns

Transparent limitations

Consistent tone and feedback

Gradual capability exposure

Trust is cumulative.

Designing for calibrated trust

The real goal is not blind trust.

It is calibrated trust.

Users should:

Rely on the system when appropriate

Question it when necessary

Understand its boundaries

Over-trust is as dangerous as distrust.

Well-designed AI products create informed reliance.
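
Calibration can also be checked, at least roughly. The sketch below assumes the product logs whether each output was accepted unchanged and whether it later turned out to be correct. The `Interaction` record and the `reliance_gap` metric are hypothetical illustrations, not an established measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    accepted: bool  # the user kept the AI output without changes
    correct: bool   # the output was later judged correct


def reliance_gap(interactions: list[Interaction]) -> float:
    """Return the acceptance rate minus the accuracy.

    Positive values suggest over-trust, negative values suggest distrust,
    and values near zero suggest reliance roughly matches reliability.
    """
    if not interactions:
        raise ValueError("need at least one interaction")
    total = len(interactions)
    acceptance_rate = sum(i.accepted for i in interactions) / total
    accuracy = sum(i.correct for i in interactions) / total
    return acceptance_rate - accuracy
```

This is a crude aggregate: it can read as zero even when users accept the wrong outputs and reject the right ones, so it works best as a prompt for closer inspection rather than a score to optimize.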

Takeaway

AI product design is not just about interface elegance or model performance.

It is about designing behavioral trust loops that make intelligent systems usable, reliable, and accountable.

In AI products, trust is the primary user experience.